Swift Regex Deep Dive

Our introductory guide to Swift Regex. Learn regular expressions in Swift including RegexBuilder examples and strongly-typed captures.

Update 03/06/18 (The Quartz API has undergone some radical changes over the years. We’re updating our popular Core Graphics series to work well with the current version of Swift, so here is an update to the first installment.)

Mac and iOS developers have a number of different programming interfaces to get stuff to appear on the screen. UIKit and AppKit have various image, color and path classes. Core Animation lets you move layers of stuff around. OpenGL lets you render stuff in 3-space. SpriteKit lets you animate. AVFoundation lets you play video.

Core Graphics, also known by its marketing name “Quartz,” is one of the oldest graphics-related APIs on the platforms. Quartz forms the foundation of most things 2-D. Want to draw shapes, fill them with gradients and give them shadows? That’s Core Graphics. Compositing images on the screen? Those go through Core Graphics. Creating a PDF? Core Graphics again.

CG (as it is called by its friends) is a fairly big API. It runs the gamut from basic geometrical data structures (such as points, sizes, vectors and rectangles) and the calls to manipulate them, through the code that renders pixels into images or onto the screen, all the way to event handling. You can use CG to create “event taps” that let you listen in on and manipulate the stream of events (mouse clicks, screen taps, random keyboard mashing) coming in to the application.
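To make the "basic geometrical data structures" end of that range concrete, here is a minimal sketch of the value types and a few of the calls that manipulate them. The specific coordinates are made up for illustration; the types and methods are the standard Core Graphics geometry API as exposed to Swift.

```swift
import CoreGraphics

// A rectangle is just an origin (CGPoint) plus a size (CGSize).
let origin = CGPoint(x: 10, y: 20)
let size = CGSize(width: 100, height: 50)
let rect = CGRect(origin: origin, size: size)

// Shrink the rectangle by 5 points on every side.
let inset = rect.insetBy(dx: 5, dy: 5)

// Slide the rectangle 30 points along x without changing its size.
let moved = rect.offsetBy(dx: 30, dy: 0)

// Hit-test a point against the original rectangle.
let hit = rect.contains(CGPoint(x: 50, y: 40))

// The area shared by the original and the moved rectangle.
let overlap = rect.intersection(moved)

// Scale the rectangle by 2 in both directions with an affine transform.
let doubled = rect.applying(CGAffineTransform(scaleX: 2, y: 2))

// A vector is a direction and magnitude, stored as dx/dy.
let nudge = CGVector(dx: 3, dy: -4)

print(inset, moved, hit, overlap, doubled, nudge)
```

These are plain value types, so none of this touches the screen. Nothing gets rendered until the geometry is handed to a drawing call.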

OK. That last one, event handling, is weird. Why is a graphics API dealing with user events? Like everything else, it has to do with History. And knowing a bit of history can explain why parts of CG behave like they do.

Back in the mists of time (the 1980s, when Duran Duran was ascendant), graphics APIs were pretty primitive compared to what we have today. You could pick from a limited palette of colors, plot individual pixels, lay down lines and draw some basic shapes like rectangles and ellipses. You could set up clipping regions that told the world, “Hey, don’t draw here,” and sometimes you had some wild features like controlling how wide lines could be. Frequently there were “bit-blitting” features for copying blocks of pixels around. QuickDraw on the Mac had a cool feature called regions that let you create arbitrarily-shaped areas and use them to paint through, clip, outline or hit-test. But in general, APIs of the time were very pixel oriented.

In 1985, Apple introduced the LaserWriter, a printer that contained a microprocessor that was more powerful than the computer it was hooked up to, had 12 times the RAM, and cost twice as much. This printer produced (for the time) incredibly beautiful output, due to a technology called PostScript.

PostScript is a stack-based computer language from Adobe that is similar to FORTH. PostScript, as a technology, was geared for creating vector graphics (mathematical descriptions of art) rather than being pixel based. An interpreter for the PostScript language was embedded in the LaserWriter so when a program on the Mac wanted to print something, the program (or a printer driver) would generate program code that was downloaded into the printer and executed.

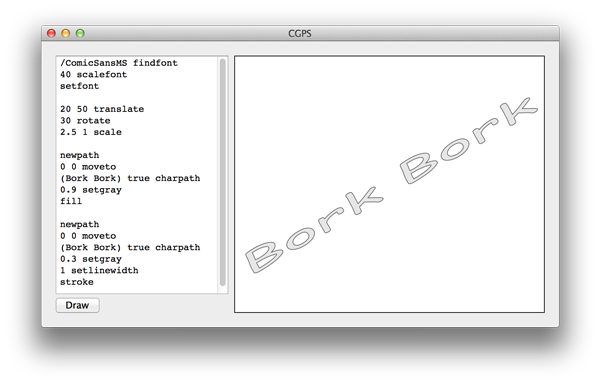

Here’s an example of some PostScript code and the resulting image:

You can find this project over on Github.

Representing the page as a program was a very important design decision. This allowed the program to represent the contents of the page algorithmically, so the device that executed the program would be able to draw the page at its highest possible resolution. For most printers at the time, this was 300 dpi. For others, 1200 dpi. All from the same generated program.

In addition to rendering pages, PostScript is Turing-complete, and can be treated as a general-purpose programming language. You could even write a web server.

When the NeXT engineers were designing their system, they chose PostScript as their rendering model. Display PostScript, a.k.a. DPS, extended the PostScript model so that it would work for a windowed computer display. Deep in the heart of it, though, was a PostScript interpreter. NeXT applications could implement their screen drawing in PostScript code, and use the same code for printing. You could also wrap PostScript in C functions (using a program called pswrap) to call from application code.

Display PostScript was the foundation of user interaction. Events (mouse, keyboard, update, etc.) went through the DPS system and then were dispatched to applications.

NeXT wasn’t the only windowing system to use PostScript at the time. Sun’s NeWS (capitalization aside, no relation to NeXT) had an embedded PostScript interpreter that drove the user’s interaction with the system.

Why don’t OS X and iOS use Display PostScript? Money, basically. Adobe charged a license fee for Display PostScript. Also, Apple is well known for wanting to own as much of their technology stack as possible. By implementing the PostScript drawing model, but not actually using PostScript, they could avoid paying the license fees and also own the core graphics code.

It’s commonly said that Quartz is “based on” PDF, and in a sense that’s true. PDF (Adobe’s Portable Document Format) is the PostScript drawing model without the arbitrary programmability. Quartz was designed so that the typical use of the API would map very closely to what PDF supports, making the creation of PDFs nearly trivial on the platform.

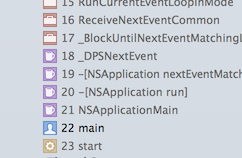

The same basic mechanisms were kept, even though Display PostScript was replaced by Quartz, including the event handling. Check out frame 18 from this Cocoa stack trace. DPS Lives!

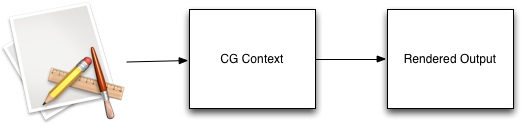

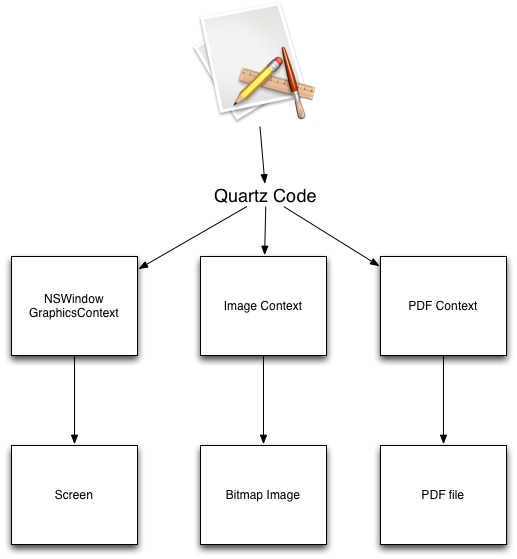

I’ll be covering more aspects of Quartz in detail in the coming weeks, but one of the big take-aways is that the code you call to “draw stuff” is abstracted away from the actual rendering of the graphics. “Render” here could be “make stuff appear in an NSView,” or “make stuff appear in a UIImage,” or even “make stuff appear in a PDF.”

All your CG drawing calls are executed in a “context,” which is a collection of data structures and function pointers that controls how the rendering is done.

There are a number of different contexts, such as (on the Mac) NSWindowGraphicsContext. This particular context takes the drawing commands issued by your code and then lays down pixels in a chunk of shared memory in your application’s address space. This memory is also shared with the window server. The window server takes all of the window surfaces from all the running applications and layers them together onscreen.

Another CG context is an image context. Any drawing code you run will lay down pixels in a bitmap image. You can use this image to draw into other contexts or save to the file system as a PNG or JPEG. There is a PDF context as well. The drawing code you run doesn’t turn into pixels; instead it turns into PDF commands and is saved to a file. Later on, a PDF viewer (such as Adobe Acrobat or Mac Preview) can take those PDF commands and render them into something viewable.
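Here is a rough sketch of that separation between drawing code and rendering, assuming an Apple platform where Core Graphics is available. The `drawBadge` function and the 200-by-200 canvas are hypothetical, invented for this illustration. The point is that the same drawing routine runs unchanged against a bitmap context and a PDF context.

```swift
import CoreGraphics
import Foundation

// Hypothetical drawing routine. It only knows it has "a context,"
// not whether the result ends up as pixels or as PDF commands.
func drawBadge(in context: CGContext, bounds: CGRect) {
    let circle = bounds.insetBy(dx: 10, dy: 10)
    context.setFillColor(red: 0.2, green: 0.4, blue: 0.8, alpha: 1.0)
    context.fillEllipse(in: circle)
    context.setStrokeColor(red: 0, green: 0, blue: 0, alpha: 1)
    context.setLineWidth(4)
    context.strokeEllipse(in: circle)
}

var mediaBox = CGRect(x: 0, y: 0, width: 200, height: 200)

// 1. Image context: drawing lays down pixels in a bitmap.
//    Passing nil data and 0 bytesPerRow lets Core Graphics allocate the buffer.
guard let bitmap = CGContext(data: nil,
                             width: 200,
                             height: 200,
                             bitsPerComponent: 8,
                             bytesPerRow: 0,
                             space: CGColorSpaceCreateDeviceRGB(),
                             bitmapInfo: CGImageAlphaInfo.premultipliedLast.rawValue)
else { fatalError("Could not create bitmap context") }

drawBadge(in: bitmap, bounds: mediaBox)

guard let image = bitmap.makeImage()
else { fatalError("Could not snapshot bitmap context") }

// 2. PDF context: the same calls are recorded as PDF commands instead.
let pdfData = NSMutableData()
guard let consumer = CGDataConsumer(data: pdfData as CFMutableData),
      let pdf = CGContext(consumer: consumer, mediaBox: &mediaBox, nil)
else { fatalError("Could not create PDF context") }

pdf.beginPDFPage(nil)
drawBadge(in: pdf, bounds: mediaBox)
pdf.endPDFPage()
pdf.closePDF()   // Flushes the finished document into pdfData.

print("Bitmap: \(image.width)x\(image.height), PDF: \(pdfData.length) bytes")
```

From here the `CGImage` could be encoded as a PNG or JPEG, and `pdfData` could be written to disk and opened in a PDF viewer.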

Next time, a closer look at contexts, and some of the convenience APIs layered over Core Graphics.
